Nope, there’s no perinatal mortality surge from Fukushima fallout

by Will Boisvert

Another day, another glop of radiophobic junk science.

The latest comes from biostatistician Hagen Scherb, a prominent anti-nuclear researcher at the prestigious Helmholtz Institute in Munich, Germany. His team specializes in eye-glazing statistical analyses that link Chernobyl radiation to vast increases in disease and death among infants and fetuses all over Europe.

Now he’s weighing in on the Fukushima accident. In a recent article in the peer-reviewed journal Medicine, he and his co-authors claim that a surge in perinatal mortality erupted in Japan after the March 2011 nuclear accident. Perinatal mortality here means stillbirths after 22 weeks of gestation plus deaths of newborns up to seven days after birth. The study finds that in “6 severely radioactively contaminated prefectures…we observed distinct long-term increases in perinatal mortality of approximately 15 % from January 2012 onward.” [1]

Health threats to children in Fukushima—like the alleged juvenile thyroid-cancer outbreak—have been a major theme of alarmist claims since the accident. And like the thyroid-cancer scare, they have been thoroughly debunked. [2] Scherb’s new claim is no different. Once we take a closer look at it, the perinatal mortality surge proves to be a mirage ginned up from cherry-picked statistics, inappropriate comparisons and special pleading—and a deliberate burial of important evidence that contradicts Scherb’s hypothesis.

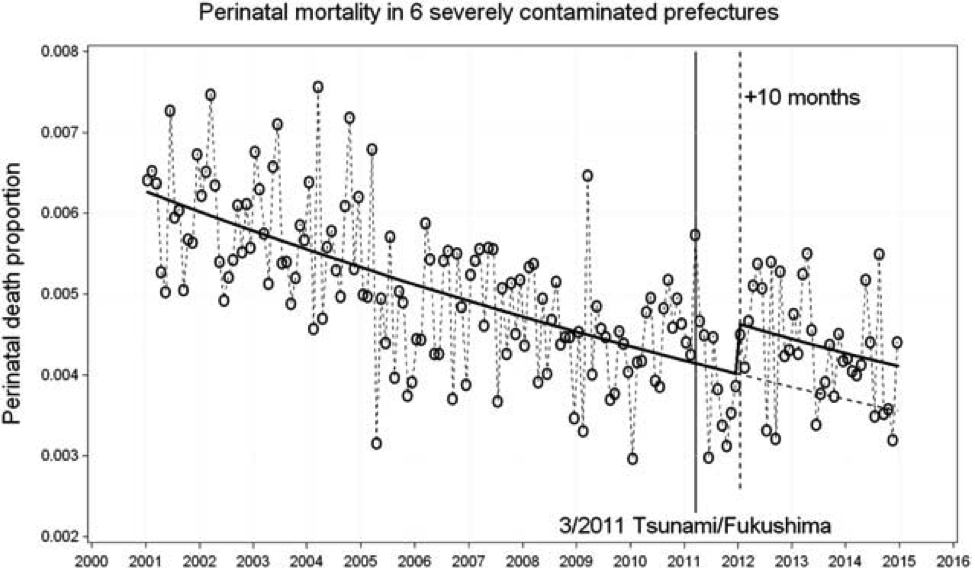

Here’s the paper’s smoking-gun graph:

Figure 3. Monthly perinatal mortality in 6 severely contaminated prefectures Fukushima, Gunma, Ibaraki, Iwate, Miyagi, and Tochigi; jump in January 2012, jump odds ratio 1.156 (1.061, 1.259).
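The caption’s “jump odds ratio” compares the odds of a perinatal death after the change point with the odds before it. The pair in parentheses is presumably a confidence interval.

As a rough illustration only, the sketch below computes a crude before/after odds ratio with a Wald interval from a 2×2 table of counts. It is not Scherb’s change-point model, which also fits a time trend and estimates where the jump falls. The function name and the default z = 1.96 (an approximate 95% interval) are assumptions of this sketch.

```python
import math

def odds_ratio_wald(deaths_after, survivors_after, deaths_before, survivors_before, z=1.96):
    """Crude odds ratio (after vs. before) with a Wald confidence interval.

    Hypothetical helper for illustration only; z=1.96 gives an approximate
    95% interval. All four counts must be positive.
    """
    counts = (deaths_after, survivors_after, deaths_before, survivors_before)
    if min(counts) <= 0:
        raise ValueError("all counts must be positive")
    odds_after = deaths_after / survivors_after
    odds_before = deaths_before / survivors_before
    or_hat = odds_after / odds_before
    # Standard error of log(OR) from the four cell counts (Woolf's method)
    se_log = math.sqrt(sum(1.0 / c for c in counts))
    lower = math.exp(math.log(or_hat) - z * se_log)
    upper = math.exp(math.log(or_hat) + z * se_log)
    return or_hat, lower, upper
```

Note what such an interval does and does not tell you. It describes only the statistical uncertainty about the size of the jump, and it says nothing about what caused it.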

If you’re wondering how Scherb et al. estimated the black trend line, with its ominous upward kink, through this noisy, wavering cloud of monthly mortality data, the “change-point analysis” described in the incomprehensible statistics section probably won’t help. But even if we accept the trend line, the paper’s hypothesis linking the January 2012 jump to Fukushima fallout faces several awkward problems.

First, the alleged mortality spike didn’t kick in until ten months after the accident, in January 2012. Indeed, Scherb’s data show that perinatal mortality in his six “highly contaminated” prefectures dropped 7.9 percent in 2011 compared to 2010, even though 2011 was the year of the accident and of maximal radiation exposures.

Scherb explains this time lag by “the superposition of the periods necessary for the dispersal of the radioactivity (several weeks) and the pregnancy length.” In other words, it would take a while, perhaps ten months, for the Fukushima fallout to spread and damage pregnancies, and then for those damaged pregnancies to end and show up in perinatal mortality stats.

But he then notes that “the duration of pregnancies at elevated risk of adverse perinatal outcome may be considerably shorter than the usual 9 months.” That point undercuts his own time-lag explanation. Pregnancies lost as early as 22 weeks of gestation would have shown up in the perinatal mortality figures well before ten months had passed.
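The timing argument can be checked with simple date arithmetic. The sketch below takes a pregnancy that begins on the accident date (March 11, 2011), adds a dispersal period, and asks when that pregnancy would reach 22 weeks. Twenty-two weeks is the earliest point at which a loss counts as a perinatal death.

The three-week dispersal period is an assumption standing in for Scherb’s “several weeks.” Pregnancies already under way at the time of the accident would reach the threshold even sooner.

```python
from datetime import date, timedelta

ACCIDENT = date(2011, 3, 11)                # Fukushima Daiichi accident
DISPERSAL = timedelta(weeks=3)              # assumption: stand-in for "several weeks"
PERINATAL_THRESHOLD = timedelta(weeks=22)   # losses count as perinatal from 22 weeks

def earliest_perinatal_date(exposure_start, threshold=PERINATAL_THRESHOLD):
    """Date on which a pregnancy beginning at exposure_start reaches the perinatal threshold."""
    return exposure_start + threshold

exposure_start = ACCIDENT + DISPERSAL
print(exposure_start)                           # 2011-04-01
print(earliest_perinatal_date(exposure_start))  # 2011-09-02
```

Even under these assumptions, the first affected pregnancies reach 22 weeks in early September 2011. That is about four months before the January 2012 change point.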

Second, the shape of the post-Fukushima trend line doesn’t make sense if fallout is the cause. In Scherb’s graph, the black trend line after the January 2012 spike roughly parallels the dotted comparison line. (Scherb’s 15 percent increase in mortality means that the declining black trend line is shifted about 15 percent higher than the declining dotted comparison line, which he extrapolated from pre-2012 statistics.)

The shape of the black line implies that the radiation effect elevated perinatal mortality rates by a constant amount for years, without wearing off over time. But that’s not how Fukushima fallout works. The radioactivity cleared quickly from the environment, and human exposures plunged accordingly. The 2014 perinatal cohort, conceived from April 2013 to July 2014, would have been exposed to much less Fukushima radiation than the 2012 and 2013 cohorts. (By April 2013, ambient radiation levels from fallout had declined by two thirds from April 2011 levels.) [3] So after January 2012, the black trend line should slope sharply downward toward a convergence with the dotted comparison line.
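A toy model shows why the excess should shrink. It rests on two assumptions, made purely for illustration:

- The ambient dose rate fell exponentially, pinned to the article’s figure that April 2013 levels were one third of April 2011 levels.
- Any excess mortality scales with the dose rate.

Real fallout decay is a mix of isotopes with different half-lives, plus weathering and decontamination, so this is only a rough guide. The function names are hypothetical.

```python
import math

# Assumption: the dose rate in April 2013 was one third of its April 2011 level
# (a two-thirds decline over 24 months), and the decline was exponential.
REMAINING_AFTER_24_MONTHS = 1 / 3
MONTHLY_FACTOR = REMAINING_AFTER_24_MONTHS ** (1 / 24)

def relative_dose_rate(months_since_april_2011):
    """Dose rate relative to April 2011 under the exponential assumption."""
    return MONTHLY_FACTOR ** months_since_april_2011

def implied_half_life_months():
    """Half-life in months implied by a two-thirds drop over 24 months."""
    return 24 * math.log(2) / math.log(3)

print(round(implied_half_life_months(), 1))  # 15.1
for months in (9, 24, 36):  # roughly January 2012, April 2013, April 2014
    print(months, round(relative_dose_rate(months), 2))
```

By this crude measure, the dose rate in April 2014 would be less than a third of its January 2012 level. An excess that tracked dose should therefore have shrunk accordingly rather than holding steady.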

But in Scherb’s trend-lines there is no convergence—which suggests that Fukushima radiation didn’t cause elevated perinatal mortality rates.

Third, there’s the problem of what else might have caused a perinatal mortality surge besides Fukushima fallout. (One plausible candidate is increased smoking and drinking by expectant moms trying to settle their nerves amid all the scare-mongering about radiation.) But Scherb et al. took no account of alternative causes. Gerry Thomas, a medical professor at London’s Imperial College who has done extensive work on the health effects of Chernobyl radiation, points out that “this is yet another ecological study that just associates statistics with an event but provides no evidence that the event was causative. There is a problem with all of these studies in that they do not allow for confounders—many things affect perinatal mortality, and as far as I am aware low dose radiation is not one of them.”

In short, the paper’s finding of a mortality surge linked to Fukushima radiation raises more questions than it answers. But there’s a deeper question: was there any surge at all?

To investigate that I looked at some very pertinent data that Scherb and his coauthors chose not to present: the perinatal mortality stats in Fukushima prefecture proper. Fukushima had much higher radiation levels from fallout than any other prefecture, so if there was a mortality surge it should show up there most clearly.

But when we look at perinatal mortality in Fukushima in the years after the nuclear accident, we find the opposite: a marked decrease in mortality. To filter out the statistical noise in monthly data, I looked at yearly mortality data for Fukushima prefecture during the four years before the accident (2007-10) and the four years after it (2011-14) [4]:

Perinatal mortality rate in Fukushima prefecture, deaths per 1000 live births. The blue lines mark average rates during 2007-10 and 2011-14.

When we look at Fukushima proper, the picture looks very different from the one in Scherb’s paper. In 2011, when most newborns would have spent part or all of their gestation under the fallout, there was a sharp drop in perinatal mortality from 4.6 deaths per thousand live births in 2010 to 3.6 in 2011, a decline of 22 percent. The rate rebounded in 2012, but only to 4.6, the same rate as in 2010 when there was no contamination.

The rate rose again in 2013 to 5.3, the same as in 2008. But it’s hard to attribute that increase to radiation. The 2013 perinatal cohort, conceived from April 2012 through July 2013, would have been exposed to much less radiation than the 2011 and 2012 cohorts, for two reasons. Radiation levels were falling rapidly throughout the prefecture, and the highly contaminated exclusion zone had been evacuated in 2011. (By April 2012, radiation levels in the prefectural capital, Fukushima City, had fallen by more than half from April 2011 levels.) [5]

Also, jumps of that size occur in the data even without a nuclear accident: the mortality rate rose from 4.5 in 2007 to 5.3 in 2008. Finally, the mortality rate plunged again in 2014, to 3.4.
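The year-to-year swings described above are easy to check. The sketch below uses the rounded annual rates quoted in this article (deaths per 1000 live births, from the e-Stat tables in note [4]). The 2009 rate isn’t quoted here, so it’s left out and only consecutive years are compared. The average is a simple unweighted mean of annual rates, not a birth-weighted one.

```python
# Fukushima prefecture perinatal mortality, deaths per 1000 live births,
# as quoted in the text (rounded). 2009 is not quoted, so it is omitted.
RATES = {2007: 4.5, 2008: 5.3, 2010: 4.6, 2011: 3.6, 2012: 4.6, 2013: 5.3, 2014: 3.4}

def year_over_year(rates):
    """Percent change between each pair of consecutive years present in `rates`."""
    changes = {}
    for year in sorted(rates):
        prev = year - 1
        if prev in rates:
            changes[(prev, year)] = 100 * (rates[year] - rates[prev]) / rates[prev]
    return changes

def mean_rate(rates, years):
    """Unweighted average of the annual rates for the given years."""
    years = list(years)
    return sum(rates[y] for y in years) / len(years)

for (y0, y1), pct in year_over_year(RATES).items():
    print(f"{y0}->{y1}: {pct:+.1f}%")
print(f"2011-14 average: {mean_rate(RATES, range(2011, 2015)):.3f}")
```

The results line up with the text:

- The 2007-08 jump (about +17.8%) is larger than the 2012-13 rise (about +15.2%).
- The 2010-11 drop works out to about -21.7%, which the text rounds to 22 percent.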

Multi-year averages show a substantial downward shift in perinatal mortality in the post-accident period. Below is a table showing how two-year and four-year averages of mortality rates declined in Fukushima. Declining perinatal mortality is expected because of healthier lifestyles and improving medical care. The real question, and the core metric of the Scherb paper, is whether Fukushima’s mortality declined as fast as it would have without the radiation from the accident.

The answer is yes when we compare Fukushima with uncontaminated areas of Japan. The table includes data for a comparison group of 38 prefectures that the Scherb paper classed as having no significant contamination. The numbers show that mortality declined a little faster in Fukushima after the accident than in the uncontaminated prefectures, even for the heavily contaminated 2011-2012 cohorts.

Decline in perinatal mortality rates (deaths per 1000 live births) averaged over two- and four-year periods before and after the nuclear accident, in Fukushima prefecture and in Scherb’s 38 uncontaminated prefectures.

None of this Fukushima data fits with Scherb’s claim of an uptick in perinatal mortality caused by Fukushima fallout. What it shows is random fluctuations that don’t correlate well with radiation exposures, on top of an overall downward trend in mortality after the nuclear accident.

You would think that data from Fukushima prefecture would be front and center in any study of perinatal mortality effects from the nuclear accident. But that data doesn’t tell an alarmist story.

Instead of spotlighting it, Scherb and his coauthors obscured this crucial data by aggregating it with statistics from five other “high contamination” prefectures. That’s not just wrong but improper, because the five other prefectures were not highly contaminated: radiation exposures there were many times lower than in Fukushima proper. This makes Scherb’s association between high contamination and high mortality even more suspect.

Scherb’s method of selecting high contamination prefectures is very odd: “Six prefectures—Iwate, Miyagi, Fukushima, Ibaraki, Tochigi, and Gunma—were classed as ‘severely contaminated;’ they include wide areas in which the radiation dose in the air was higher than 0.25 micro-Sieverts per hour, according to a map documenting estimates radiation doses as of December 2011.” So the assignment of contamination categories seems to have been based solely on a subjective judgment of the geographical air-dose rates on a single map with no attempt to correlate the radiation levels with populated areas or do any other dose assessment.
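For a sense of scale, the map’s 0.25 micro-Sievert-per-hour threshold can be expressed as an annual dose. This uses a deliberately extreme assumption: someone standing unshielded outdoors at such a spot around the clock for a full year. Time spent indoors, where buildings provide shielding, would lower the figure.

$$0.25\ \mu\text{Sv/h} \times 24\ \text{h/day} \times 365\ \text{days} = 2190\ \mu\text{Sv} \approx 2.2\ \text{mSv}$$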

More serious dose assessments show how misleading this grouping is. Take first-year doses to infants, the best comparison group for the perinatal cohort. According to the United Nations Scientific Committee on the Effects of Atomic Radiation (UNSCEAR), those doses in Fukushima Prefecture outside the evacuation zone were three to five times higher than in the other five prefectures.

Source: UNSCEAR [6]

Infants from the Fukushima evacuation zone got even higher first-year doses, up to 9.3 millisieverts [7], more than 18 times the average dose to infants in Iwate prefecture.
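Reading “more than 18 times” as “more than 18 times as large,” these figures imply an upper bound on the average first-year infant dose in Iwate:

$$\bar{D}_{\text{Iwate}} < \frac{9.3\ \text{mSv}}{18} \approx 0.52\ \text{mSv}$$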

So the right description of Iwate and the other four prefectures apart from Fukushima is not “severely contaminated” but “barely contaminated.” Doses in these prefectures were smaller than normal variations in natural background radiation—levels that have never been found to cause ill health outside the alarmist fringes of radiation ecology.

This mismatched “severely contaminated” category has crucial implications for the “dose-response relationship,” the central concept in any epidemiological study. Put simply, an increased dose of the putative cause should yield an increased response in the incidence of the disease: three-pack-a-day smokers should have a larger increase in lung-cancer rates than one-cigarette-per-day smokers.
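One generic way to check whether grouped data show a positive dose-response ordering is a rank concordance measure. The sketch below computes Kendall’s tau-a over (dose, response) pairs:

- +1 means the response always rises with dose.
- -1 means it always falls.
- Values near 0 mean no consistent ordering.

This is an illustration of the concept only, not a method used by Scherb or in this article. The function name is hypothetical.

```python
from itertools import combinations

def kendall_tau_a(pairs):
    """Kendall's tau-a for a list of (dose, response) pairs.

    A pair of groups is concordant if higher dose goes with higher response,
    discordant if higher dose goes with lower response; ties count as neither.
    """
    n = len(pairs)
    if n < 2:
        raise ValueError("need at least two groups")
    score = 0
    for (d1, r1), (d2, r2) in combinations(pairs, 2):
        product = (d1 - d2) * (r1 - r2)
        if product > 0:
            score += 1  # concordant pair
        elif product < 0:
            score -= 1  # discordant pair
    return score / (n * (n - 1) / 2)
```

In data with a genuine dose-response relationship, groups ordered by dose should give a clearly positive value. Lumping groups with very different doses into a single category throws away exactly the ordering this kind of check relies on.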

The effect of Scherb’s improper grouping of Fukushima with five other prefectures in a single dose category was to imply a positive dose-response relationship that in fact did not exist. Once the prefectural data are disentangled, the dose-response relationship disappears. Perinatal mortality rates in severely contaminated Fukushima declined substantially after the accident, in line with uncontaminated comparison prefectures; it’s only the five other prefectures, with much lower contamination, that saw an uptick in relative mortality rates.

When a larger radiation dose leads to a smaller mortality response, it’s hard to argue that radiation is causing the mortality. As we saw above, the relationship between Fukushima fallout and perinatal mortality over time doesn’t show a dose-response relationship: periods of higher contamination don’t align with upticks in relative mortality. And that’s also true over geographical space once we disaggregate Scherb’s bogus “severely contaminated” dose category.

The flaws in this paper are ubiquitous in Scherb’s radiation studies: cherry-picked data-sets; unjustified and misleading dose categories that lump apples with oranges; mismatched statistical aggregates that obscure more than they reveal; a glossing-over of confounding factors; ad hoc rationalizations when the numbers still don’t fit; a brisk sweeping of contradictory data under the rug. Scherb gets away with all this because the forbiddingly complicated statistical apparatus he uses to extract tsunamis of risk from tiny ripples in the data overawes reviewers and distracts them from glaring flaws in the study design.

That’s a growing problem in the deeply politicized field of radiation epidemiology. As massive health databases become more accessible and software makes it easier to mine them for spurious correlations, elaborate statistical workups are increasingly used to rationalize dubious findings rather than weed them out. As the work of Scherb and like-minded radiation alarmists demonstrates, the result is a latter-day version of astrology. Just as ancient astrologers connected random stars into figures of gods and monsters, Scherb connects random data-points into imaginary pictures of life-threatening radiation.

Unfortunately, Scherb’s brand of astrology has real-world consequences. By contributing to the demonization of nuclear power, it helps obstruct one of the world’s most important sources of clean energy.

1. Scherb et al., “Increases in perinatal mortality in prefectures contaminated by the Fukushima nuclear power plant accident in Japan: A spatially stratified longitudinal study.” Medicine; 2016 Sep; 95(38). https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5044925/pdf/medi-95-e4958.pdf

3. http://radioactivity.nsr.go.jp/en/contents/1000/496/24/1304941_041519.pdf ; http://radioactivity.nsr.go.jp/en/contents/7000/6646/24/192_1_0416.pdf

4. Table 8-13, “Trends in perinatal death rates by each prefecture: Japan” http://www.e-stat.go.jp/SG1/estat/ListE.do?lid=000001101179 ; http://www.e-stat.go.jp/SG1/estat/ListE.do?lid=000001137968 ; http://www.e-stat.go.jp/SG1/estat/ListE.do?lid=000001101887

5. http://radioactivity.nsr.go.jp/en/contents/1000/496/24/1304941_041519.pdf ; http://radioactivity.nsr.go.jp/en/contents/1000/238/24/192_1_15_120416.pdf

6. UNSCEAR 2013 Report. Volume I: Report to the General Assembly. Scientific Annex A: Levels and Effects of radiation exposure due to the nuclear accident after the great east-Japan earthquake and tsunami. p. 55.

7. Ibid., p. 57.